AscendKernelGen/KernelGen-LM-1.7B

KernelGen-LM-1.7B is a state-of-the-art domain-adaptive large language model specialized for low-level NPU kernel generation, specifically for the Huawei Ascend architecture using the AscendC programming language. Built upon the Qwen3-1.7B backbone, it is trained on the Ascend-CoT dataset and refined via reinforcement learning with execution feedback.

Resources:

- Paper: AscendKernelGen: A Systematic Study of LLM-Based Kernel Generation for Neural Processing Units

- GitHub Code: weich97/NPUKernelBench

- Datasets: Ascend-CoT
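Because the model is built on the Qwen3-1.7B backbone, it can presumably be loaded like other causal language models in the Hugging Face `transformers` library. The following is a minimal sketch under that assumption, not a documented usage recipe: the prompt wording, the `build_messages` and `generate_kernel` helpers, and the `max_new_tokens` default are all hypothetical, and the presence of a chat template in the tokenizer is assumed rather than confirmed by this card.

```python
MODEL_ID = "AscendKernelGen/KernelGen-LM-1.7B"  # repository name taken from this card


def build_messages(task: str) -> list:
    """Wrap a kernel request in a chat-style message list (hypothetical prompt wording)."""
    prompt = f"Write an AscendC kernel for the following task:\n{task}"
    return [{"role": "user", "content": prompt}]


def generate_kernel(task: str, max_new_tokens: int = 2048) -> str:
    """Generate a response; assumes the checkpoint loads via the standard Auto classes."""
    # Imported lazily so build_messages can be used without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(MODEL_ID, torch_dtype="auto")
    # Assumption: the tokenizer ships a chat template, as Qwen3 checkpoints do.
    inputs = tokenizer.apply_chat_template(
        build_messages(task),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    )
    output = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, skipping the prompt.
    new_tokens = output[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_kernel("Swish activation over 1024 float elements")` would download the weights on first use; the response may include a reasoning trace before the code.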

Introduction

Our framework, AscendKernelGen (AKGen), bridges the gap between general-purpose code generation and hardware-specific programming through a closed-loop system of data construction, training, and evaluation. Key innovations include:

- Ascend-CoT Dataset: A high-quality, domain-specific dataset incorporating Chain-of-Thought (CoT) reasoning. It combines documentation-based reasoning, code-centric reasoning derived from real-world kernel implementations, and general reasoning chains to capture the structured logic required for low-level NPU programming.

- Domain-Adaptive Post-Training: A two-stage optimization process that yields KernelGen-LM. We first employ Supervised Fine-Tuning (SFT) with error-derived supervision (correcting API misuse and numerical errors). This is followed by Reinforcement Learning (RL) using Direct Preference Optimization (DPO), driven by execution-based correctness and performance signals.

- Hardware-Grounded Evaluation: Validated using NPUKernelBench, a comprehensive benchmark that assesses compilation success, functional correctness, and performance (latency) on real Ascend hardware across varying complexity levels.

- Performance: On complex Level-2 kernels, the model improves significantly over baselines, solving tasks on which general-purpose models (such as Qwen3 and Llama3.1) fail completely.
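To make the link between execution feedback and preference optimization concrete, the sketch below shows one plausible way to turn per-kernel outcomes (compilation, correctness, latency) into a chosen/rejected pair and to evaluate the standard DPO loss on it. The `ExecResult` record, the ranking rule, and the `beta` value are illustrative assumptions; the card does not specify how AKGen builds its pairs.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExecResult:
    """Hypothetical record of one candidate kernel's outcome on hardware."""
    code: str
    compiled: bool
    correct: bool
    latency_us: Optional[float] = None  # measured only when the kernel runs correctly


def score(r: ExecResult) -> tuple:
    """Rank candidates: failed < compiles < correct < correct and faster."""
    fast = -r.latency_us if (r.correct and r.latency_us is not None) else float("-inf")
    return (r.compiled, r.compiled and r.correct, fast)


def preference_pair(candidates):
    """Return (chosen, rejected) for one prompt, or None if all candidates tie."""
    ranked = sorted(candidates, key=score)
    worst, best = ranked[0], ranked[-1]
    if score(best) == score(worst):
        return None  # no usable preference signal
    return best, worst


def dpo_loss(logp_chosen, logp_rejected, ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """Standard DPO loss for one pair, given sequence log-probabilities."""
    margin = beta * ((logp_chosen - ref_logp_chosen) - (logp_rejected - ref_logp_rejected))
    return math.log1p(math.exp(-margin))  # equals -log(sigmoid(margin))
```

The loss shrinks as the policy assigns relatively more probability to the chosen kernel than the reference model does, which is how correctness and latency signals would steer the model under this scheme.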

Representative Case Studies

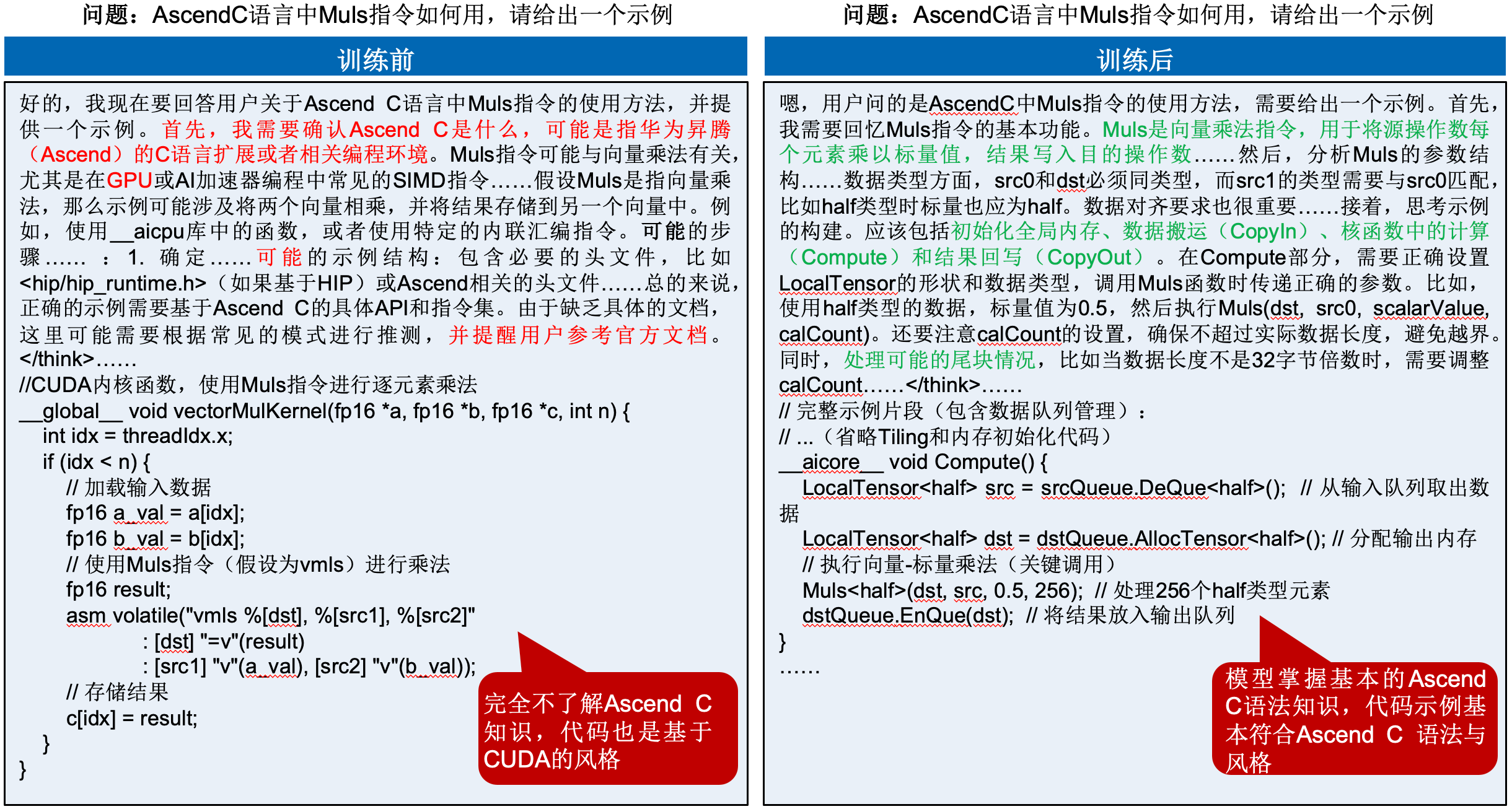

Example: Effect of Knowledge Injection

The following case study, which concerns the usage of the Muls instruction in AscendC, demonstrates the qualitative improvement brought by CoT-based domain knowledge injection in both professional knowledge comprehension and code generation capability.

🤔 Original response (before training):

The response exhibits substantial uncertainty, as indicated by expressions such as “possibly” and “assuming.” The model attempts to reason by analogy from general programming knowledge, yet lacks an accurate understanding of AscendC-specific APIs, the precise usage of the Muls instruction, and its architectural context within the Ascend processor. The provided code example (e.g., involving hip/hip_runtime.h) is incorrect and explicitly reveals the model’s lack of domain-specific knowledge, as evidenced by statements such as “insufficient documentation” and “cannot be implemented.”

🎓 Improved response (after training):

The response demonstrates clear expert-level reasoning.

- 🧠 Structured reasoning (`<think>`): The model first analyzes the `Muls` instruction in terms of its operational background (vector multiplication), key parameters (e.g., `src0`, `dst`), and essential data layout constraints (e.g., whether `src0` must be a scalar or allocated in a specific region).

- ✅ Accurate implementation: It then provides correct and concrete implementation steps, including initialization, local tensor memory management through `InQueue` and `OutQueue`, data movement (`CopyIn`), and the core computation invocation (`ScalarValue`).

- ⌨️ Code correctness: The final generated code example (`__aicore__ void Compute()`) correctly employs the AscendC API and demonstrates an accurate understanding of both data queuing and vector instructions.
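The queue-based flow described above can be emulated on the host to show the intended data movement. The sketch below is plain Python, not AscendC: it assumes `Muls` scales every element of a source tensor by a scalar, and the `muls` and `run_muls_kernel` helpers, the tile size, and the use of `deque` as a stand-in for `InQueue` and `OutQueue` are illustrative choices only.

```python
from collections import deque


def muls(src, scalar):
    """Reference semantics assumed for Muls: dst[i] = src[i] * scalar."""
    return [v * scalar for v in src]


def run_muls_kernel(global_in, scalar, tile_len):
    """Emulate CopyIn -> Compute -> CopyOut over fixed-size tiles."""
    in_queue, out_queue = deque(), deque()  # stand-ins for InQueue / OutQueue
    global_out = []
    for start in range(0, len(global_in), tile_len):
        # CopyIn: stage one tile of global data into the input queue.
        in_queue.append(global_in[start:start + tile_len])
        # Compute: dequeue the tile, apply Muls, enqueue the result.
        out_queue.append(muls(in_queue.popleft(), scalar))
        # CopyOut: write the result tile back to global memory.
        global_out.extend(out_queue.popleft())
    return global_out
```

On real hardware the three stages overlap across tiles; the sequential loop here only illustrates the order in which each tile passes through the queues.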

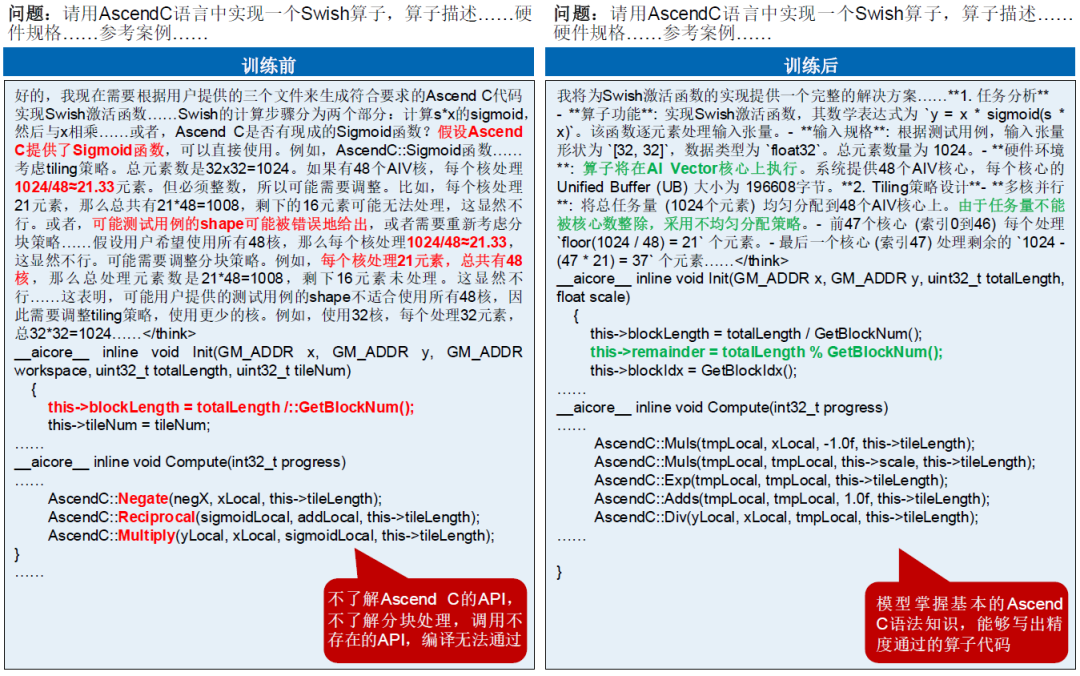

Example: Comparison of Generated Operators

The following example, which concerns the implementation of a Swish operator in AscendC, illustrates the qualitative improvement brought by CoT-based domain knowledge injection in handling complex operator implementation and tiling strategy design.

🤔 Original response (before training):

The response exhibits substantial uncertainty in the critical tiling strategy, with phrases such as “possibly,” “obviously not feasible,” and “assuming.” Although the model attempts a simple arithmetic division (1024/48 = 21.33), it fails to understand Ascend core scheduling mechanisms and cannot handle non-divisible remainders appropriately. Its implementation of the Swish operator itself is also confused, with an inaccurate composition of operations such as Negate, Reciprocal, and Multiply, and an imprecise understanding of the required APIs. The response ultimately concludes that compilation would fail.

🎓 Improved response (after training):

The response demonstrates clear expert-level reasoning.

- 🧠 Structured reasoning (`<think>`): The model first accurately analyzes the mathematical formulation of the `Swish` operator, namely $y = x \times \mathrm{sigmoid}(x)$, together with the input specification. More importantly, it designs a robust tiling strategy by correctly identifying the total workload (1024) and the number of cores (48), and by formulating an uneven remainder-aware workload distribution scheme (the first 47 cores process 21 elements each, while the last core processes 37 elements).

- ✅ Accurate implementation: The model then correctly realizes this tiling strategy in the `Init()` function by computing `blockLength` and `remainder`. In the `Compute()` function, it provides a correct and concrete operator implementation pipeline, combining AscendC instructions such as `Muls`, `Exp`, `Adds`, and `Div` to construct the sigmoid function step by step and ultimately complete the `Swish` computation.

- ⌨️ Code correctness: The final generated code examples (`__aicore__ void Init()` and `__aicore__ void Compute()`) correctly employ the AscendC API, demonstrating not only an accurate understanding of the operator’s mathematical logic, but also a deep mastery of multi-core parallelization and tiling strategies.
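The tiling arithmetic and the instruction sequence can be checked with a small host-side sketch. It is written in plain Python rather than AscendC; the helper names are hypothetical stand-ins for the instructions named above, and the particular composition (negate, exponentiate, add one, divide) is one ordering consistent with that list, not necessarily the exact code the model produced.

```python
import math


def tile_lengths(total, cores):
    """Per-core element counts; the last core absorbs the remainder."""
    block_length, remainder = divmod(total, cores)
    return [block_length] * (cores - 1) + [block_length + remainder]


# Element-wise stand-ins for the named instructions (not the AscendC API).
def muls(x, s):
    return [v * s for v in x]


def exp(x):
    return [math.exp(v) for v in x]


def adds(x, s):
    return [v + s for v in x]


def div(a, b):
    return [p / q for p, q in zip(a, b)]


def swish(x):
    """y = x * sigmoid(x), built as x / (1 + exp(-x))."""
    t = muls(x, -1.0)  # -x
    t = exp(t)         # exp(-x)
    t = adds(t, 1.0)   # 1 + exp(-x)
    return div(x, t)   # x / (1 + exp(-x))
```

With 1024 elements and 48 cores, `divmod` gives a block length of 21 and a remainder of 16, so `tile_lengths(1024, 48)` yields 47 entries of 21 followed by a final entry of 37, matching the distribution described above.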

Citation

@article{cao2026ascendkernelgen,
  title={AscendKernelGen: A Systematic Study of LLM-Based Kernel Generation for Neural Processing Units},
  author={Xinzi Cao and Jianyang Zhai and Pengfei Li and Zhiheng Hu and Cen Yan and Bingxu Mu and Guanghuan Fang and Bin She and Jiayu Li and Yihan Su and Dongyang Tao and Xiansong Huang and Fan Xu and Feidiao Yang and Yao Lu and Chang-Dong Wang and Yutong Lu and Weicheng Xue and Bin Zhou and Yonghong Tian},
  journal={arXiv preprint arXiv:2601.07160},
  year={2026},
  url={https://arxiv.org/abs/2601.07160}
}
